Introduction

Training data, validation data, and test data are core concepts in machine learning. In the world of machine learning, data reigns supreme, and it must be properly segmented for a model to produce reliable results. The trio of training, validation, and test data sets plays an important role in shaping your machine learning model.

It is important to understand how these three types of data differ. Further, you need to understand how your training, validation, and test data sets work together to optimize your machine learning models.

Data and machine learning

Machine learning (ML) is a branch of artificial intelligence (AI) that uses data and algorithms to learn patterns from real-world data. Machine learning helps you forecast, analyze, and study human behaviors and events. It also helps you understand customer behaviors and spot process-related patterns and operational gaps.

Machine learning also helps you predict trends and developments. How you build a machine learning model depends on how its data is collected and organized. In this process, data is divided into three types:

- Training data.

- Validation data.

- Test data.

What is training data?

Training data is the dataset a machine learning model learns from. The model uses it to learn how features relate to an expected outcome, such as churn, sales lead scoring, or a time series forecast, so that it can eventually predict that outcome for new data. Here is an example of training data for predicting sales lead conversion:

The model uses features (columns) to train on the outcome (target variable: “converted – YES/NO”).

Training the model means running the training data through it and comparing each result with the target, or expected, outcome. Using that comparison as a guide, the model’s parameters are adjusted until its predictions are close enough to the target.
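The compare-and-adjust loop described above can be sketched in a few lines of plain Python. This is only an illustration: the article does not name an algorithm, so logistic regression trained with stochastic gradient descent is an assumption here, and the two features (`emails_opened`, `demo_requested`) and their values are hypothetical.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def train(rows, targets, learning_rate=0.5, epochs=500):
    """Fit weights and a bias by repeatedly comparing predictions with targets."""
    weights = [0.0] * len(rows[0])
    bias = 0.0
    for _ in range(epochs):
        for features, target in zip(rows, targets):
            score = sum(w * x for w, x in zip(weights, features)) + bias
            error = sigmoid(score) - target  # comparison with the expected outcome
            # Adjust each parameter in the direction that shrinks the error.
            weights = [w - learning_rate * error * x for w, x in zip(weights, features)]
            bias -= learning_rate * error
    return weights, bias

def predict(weights, bias, features):
    score = sum(w * x for w, x in zip(weights, features)) + bias
    return 1 if sigmoid(score) >= 0.5 else 0

# Hypothetical leads: [emails_opened (scaled to 0..1), demo_requested (0 or 1)]
train_rows = [[0.1, 0.0], [0.2, 0.0], [0.8, 1.0], [0.9, 1.0], [0.3, 0.0], [0.7, 1.0]]
train_targets = [0, 0, 1, 1, 0, 1]  # 1 = converted, 0 = not converted

weights, bias = train(train_rows, train_targets)
train_predictions = [predict(weights, bias, row) for row in train_rows]
```

In practice you would likely rely on a library rather than hand-written updates, but the structure is the same: predict, compare with the target, adjust, repeat.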

What is validation data?

Validation data is used to check the accuracy and quality of a model that was fitted on the training data. A validation dataset does not teach a model directly. Instead, validation sets reveal problems such as bias or overfitting, so that the model’s settings can be adjusted before it is finalized. In this way, a validation set affects a machine learning model indirectly. Your validation set is typically used several times during development to validate the model’s outputs. A validation set is also known as a development set, as it is only used during machine learning model development.
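One way to picture this indirect influence is choosing a setting by its score on the validation set. The sketch below assumes a model that outputs a score between 0 and 1 and picks the decision threshold with the best validation accuracy. The scores, outcomes, and candidate thresholds are hypothetical.

```python
def accuracy(predictions, targets):
    correct = sum(1 for p, t in zip(predictions, targets) if p == t)
    return correct / len(targets)

def predict_with_threshold(scores, threshold):
    return [1 if s >= threshold else 0 for s in scores]

# Hypothetical validation set: model scores and the known outcomes
validation_scores = [0.15, 0.40, 0.55, 0.62, 0.80, 0.35, 0.70, 0.48]
validation_targets = [0, 0, 0, 1, 1, 0, 1, 0]

# The validation set is reused once per candidate setting.
candidate_thresholds = [0.3, 0.5, 0.6, 0.7]
results = {
    t: accuracy(predict_with_threshold(validation_scores, t), validation_targets)
    for t in candidate_thresholds
}
best_threshold = max(results, key=results.get)
```

The model never trains on these rows, yet they still shape the final model through the setting that gets selected.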

What is test data?

Test data is used to perform a realistic check on a trained model. Test data, also known as a testing set or test set, confirms whether the machine learning model is accurate on data it has not seen before. Once the machine learning model is confirmed as accurate, it can be used for predictive analytics. Test data is similar to validation data, but unlike validation data, a test set is used only once, on the final model.

Here’s an example of a test set for predicting sales lead conversion:

Because we know what the outcome should be (Converted: YES or NO), we can measure the model’s performance and accuracy. To do so, we count how many times the model correctly predicts the target outcome (“Converted”).

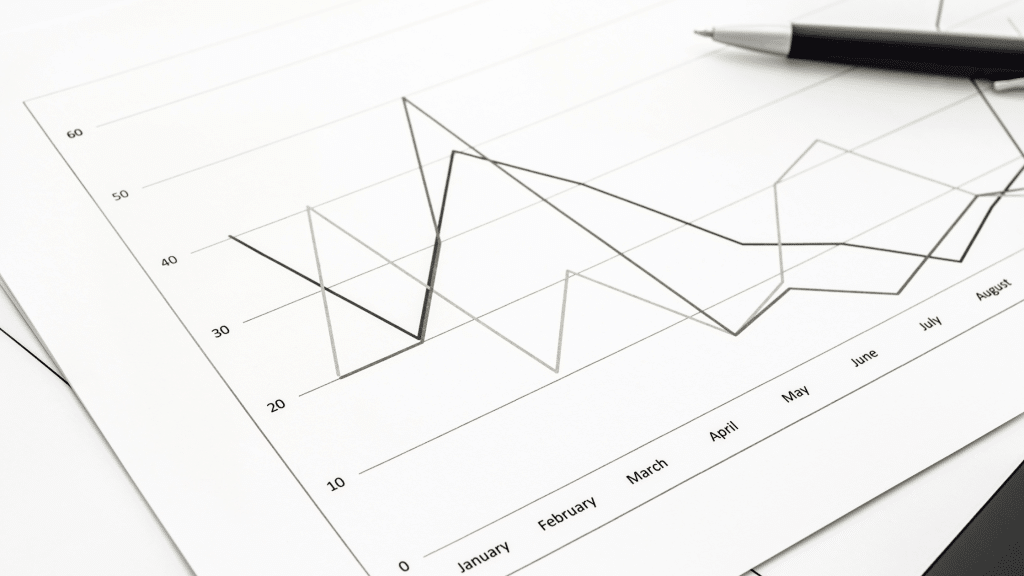
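Counting correct predictions on a test set can be sketched as follows. The YES/NO values are hypothetical and are not taken from the example above.

```python
def count_correct(predicted, actual):
    """Count rows where the predicted outcome equals the known outcome."""
    return sum(1 for p, a in zip(predicted, actual) if p == a)

# Hypothetical test-set outcomes and the model's predictions for the same leads
actual = ["YES", "NO", "NO", "YES", "NO", "YES", "NO", "NO", "YES", "NO"]
predicted = ["YES", "NO", "YES", "YES", "NO", "NO", "NO", "NO", "YES", "NO"]

correct = count_correct(predicted, actual)
accuracy = correct / len(actual)
print(f"{correct} of {len(actual)} correct, accuracy = {accuracy:.0%}")
```

Here the model is right on 8 of 10 leads, so its accuracy on this small test set is 80%.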

Data splitting for training and testing your machine learning model

Teaching a machine learning model involves data splitting, so you need to denote which type of data you are working with: training data, validation data, or test data. At a minimum, the data is split into two primary datasets: training data and test data. When a validation set is needed, it is usually carved out of the training portion. Data splitting ensures that the model is evaluated on data it did not learn from, which helps analysts judge whether the features it relies on genuinely influence the outcome. A common approach is an 80:20 split. It is often associated with the Pareto principle, also known as the 80:20 rule, which states that roughly 80% of effects come from 20% of causes. In data splitting, however, the ratio is a rule of thumb rather than a consequence of that principle, and other ratios such as 70:30 are also used. As a starting point, your data splitting approach should:

- Use 80% of your data as training data.

- Use the remaining 20% of your data as testing data.
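A minimal sketch of an 80:20 split in plain Python follows. The function name `split_dataset`, the fixed seed, and the stand-in records are assumptions made for illustration.

```python
import random

def split_dataset(rows, train_fraction=0.8, seed=42):
    """Shuffle a copy of the rows, then cut it into training and test portions."""
    shuffled = list(rows)
    random.Random(seed).shuffle(shuffled)  # seeded so the split is reproducible
    cut = round(len(shuffled) * train_fraction)
    return shuffled[:cut], shuffled[cut:]

records = list(range(100))  # stand-in for 100 rows of real data
train_rows, test_rows = split_dataset(records)
```

Shuffling before cutting matters when the source data is ordered, for example by date or by outcome, because otherwise the test portion would not resemble the training portion.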

Why do we split data in machine learning?

We split data in machine learning to assess our model’s performance on data it has not seen during training. Without a separate split, a model that has simply memorized its training data would look accurate, and you would have no reliable estimate of how it performs on new data.

Best practice guidelines for test data

We outline key best practices for test data. Your test data should:

- Be large enough to yield statistically meaningful results.

- Be representative of your dataset as a whole.

- Match your training data in terms of characteristics, without duplicating its records.
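One way to keep a test set representative is a stratified split, which preserves the share of each outcome in both portions. The sketch below is a plain-Python illustration under assumed names (`stratified_split`, `label_of`) and hypothetical lead records. It is not a method the article prescribes.

```python
import random
from collections import defaultdict

def stratified_split(rows, label_of, test_fraction=0.2, seed=0):
    """Split rows so that each label keeps the same share in train and test."""
    by_label = defaultdict(list)
    for row in rows:
        by_label[label_of(row)].append(row)
    rng = random.Random(seed)
    train, test = [], []
    for group in by_label.values():
        rng.shuffle(group)
        n_test = round(len(group) * test_fraction)
        test.extend(group[:n_test])
        train.extend(group[n_test:])
    return train, test

# Hypothetical leads: 30 converted, 70 not converted
leads = [{"id": i, "converted": "YES" if i < 30 else "NO"} for i in range(100)]
train, test = stratified_split(leads, label_of=lambda row: row["converted"])

test_yes_share = sum(r["converted"] == "YES" for r in test) / len(test)
train_yes_share = sum(r["converted"] == "YES" for r in train) / len(train)
```

With these inputs, converted leads make up the same share of the training and test portions, which a purely random split of a small dataset does not guarantee.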

Best practice guidelines for training data

We outline best practices for training data. With your training data, you should:

- Avoid target leakage: Your training data should only include information that will be available at the moment a prediction is made. Target leakage occurs when a feature used in the model reveals the target directly or indirectly, typically because it is recorded only after the outcome is known. The model then looks accurate during training but fails on unseen data, where that information is not available.

- Prevent training-serving skew: Ensure that data is collected and processed in the same way for training, for the final test set, and in the serving pipeline. Skew occurs when the data the model is served differs from the data it was trained on, for example because a feature is calculated differently in production.

- Use time signals: If you expect a pattern to shift over time, you need to provide the algorithm with time signal information to adjust to the pattern shift.

- Include clear information where needed: If a pattern depends on knowledge that is not obvious from the raw data, include explicit features that let the algorithm pick up that information.

- Avoid bias: Ensure that your training data is representative of the potential data you will use to develop predictions.

- Provide enough training data: Your machine learning model’s performance may not fit your target output if you don’t have enough training data.

- Ensure it is based on reality: Your training data must mimic what happens in the real world. Training data can include CRM data, documents, numbers, images, videos, and transactions with features vital to your target result.
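As an illustration of the target leakage guideline, the sketch below flags columns that would not be known when a prediction is made and removes them from a training row. The column names, and the decision about which columns are available at prediction time, are hypothetical assumptions.

```python
# Assumption: these are the only features known when a lead is scored.
AVAILABLE_AT_PREDICTION_TIME = {"lead_source", "emails_opened", "company_size"}
TARGET = "converted"

def find_leaky_columns(columns):
    """Return columns that are neither the target nor known at prediction time."""
    return sorted(
        c for c in columns
        if c != TARGET and c not in AVAILABLE_AT_PREDICTION_TIME
    )

def drop_columns(row, to_drop):
    return {key: value for key, value in row.items() if key not in to_drop}

training_row = {
    "lead_source": "webinar",
    "emails_opened": 4,
    "company_size": 120,
    "contract_value": 18000,  # only recorded after a lead converts, so it leaks the target
    "converted": "YES",
}

leaky = find_leaky_columns(training_row.keys())
clean_row = drop_columns(training_row, leaky)
```

An explicit allowlist like this is a simple guard, but it still depends on someone who knows the data deciding which columns belong on it.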

Your data must be properly segmented to ensure optimal results for your machine learning models.