Retail & Consumer Goods: Time Series Forecasting with External Drivers

The Business Question This Model Answers

How accurately can we forecast daily sales — and what happens when we enrich the model with context like promotions and holidays?

This wasn’t just about predicting demand. It was about trusting the forecast enough to make real decisions. Pricing. Inventory. Staffing. Campaigns.

We built two versions of the model.

- A baseline: clean, historical trend only.

- An improved version: same trend, now with real-world events included.

The difference wasn’t just visual.

It was measurable.

And it revealed where your data works — and where it doesn’t.

The Problem

Without a reliable sales forecast, retail operations drift into gut feel. Teams overstock “just in case.” Or under-plan during key demand windows. Either way, margin suffers.

The goal was simple: predict sales_amount daily, with enough lead time and accuracy to support planning — and trust that the model wouldn’t miss big swings.

We started with the simplest model. Then tested how much more we could explain by adding external signals.

The Data

We worked with a real sales dataset, structured like this:

| date | sales_amount | holiday | promotion |
|---|---|---|---|
| 01/01/2020 | 443 | 1 | None |
| 02/01/2020 | 318 | 0 | A |
| … | … | … | … |

Each row captured a day of sales, with two key drivers:

- Holiday: binary flag

- Promotion: categorical label (None, Promo_A, etc.)

We didn’t invent artificial signals. We used exactly what the business tracks: marketing activity and calendar effects.
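To make the structure concrete, here is a minimal sketch of how rows like these could be parsed and prepared for a model. It uses only the two sample rows shown in the table. The day-first date format (`%d/%m/%Y`) is an assumption inferred from the rows being consecutive days, and the helper names `load_rows` and `one_hot_promotions` are hypothetical, not part of any tool.

```python
import csv
import io
from datetime import datetime

# The two sample rows from the table; a real file would hold one row per day.
RAW = """date,sales_amount,holiday,promotion
01/01/2020,443,1,None
02/01/2020,318,0,A
"""

def load_rows(text, date_format="%d/%m/%Y"):
    """Parse CSV text into typed records (the date format is an assumption)."""
    rows = []
    for rec in csv.DictReader(io.StringIO(text)):
        rows.append({
            "date": datetime.strptime(rec["date"], date_format).date(),
            "sales_amount": float(rec["sales_amount"]),
            "holiday": int(rec["holiday"]),   # binary flag: 1 = holiday
            "promotion": rec["promotion"],    # categorical label
        })
    return rows

def one_hot_promotions(rows, baseline="None"):
    """Add one 0/1 column per promotion label, skipping the no-promotion baseline."""
    labels = sorted({r["promotion"] for r in rows} - {baseline})
    for r in rows:
        for label in labels:
            r[f"promo_{label}"] = 1 if r["promotion"] == label else 0
    return labels

rows = load_rows(RAW)
promo_labels = one_hot_promotions(rows)
print(promo_labels)
print(rows[1])
```

Turning the categorical promotion label into separate 0/1 columns lets a model estimate a distinct effect for each promotion type, with "no promotion" as the reference case.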

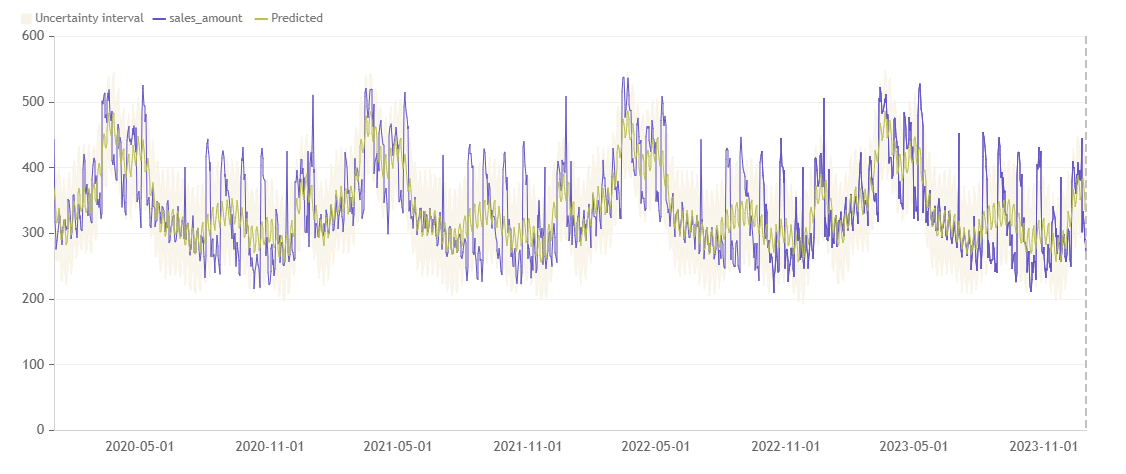

Baseline Forecast: As Good As It Gets Without Context

Our first model used only the historical trend. Seasonality patterns and smoothing were applied automatically.

📊 R-squared: 53.78%

📉 MAPE: 10.79%

The model captured the structure.

Weekly and monthly rhythms were clearly learned.

But it couldn’t explain spikes. And when the business asked “why was this week different?”, the model had no answer.
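For readers who want to reproduce the two accuracy figures on their own data, the sketch below implements the textbook definitions of R-squared and MAPE. How the forecasting tool computes them internally is not described here, so treat these formulas as an assumption. The numbers in the example are invented for illustration only.

```python
def r_squared(actual, predicted):
    """Share of variance in `actual` explained by `predicted` (1.0 = perfect)."""
    mean_actual = sum(actual) / len(actual)
    ss_res = sum((a - p) ** 2 for a, p in zip(actual, predicted))
    ss_tot = sum((a - mean_actual) ** 2 for a in actual)
    return 1 - ss_res / ss_tot

def mape(actual, predicted):
    """Mean absolute percentage error as a fraction; undefined if any actual is 0."""
    return sum(abs((a - p) / a) for a, p in zip(actual, predicted)) / len(actual)

# Illustrative values only, not taken from the case study.
actual = [100, 200, 300, 400]
predicted = [110, 190, 310, 390]

print(f"R-squared: {r_squared(actual, predicted):.2%}")
print(f"MAPE: {mape(actual, predicted):.2%}")
```

R-squared rewards explaining the ups and downs of the series, while MAPE measures the typical size of a miss relative to actual sales. A model can score reasonably on one and poorly on the other, which is why both are reported.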

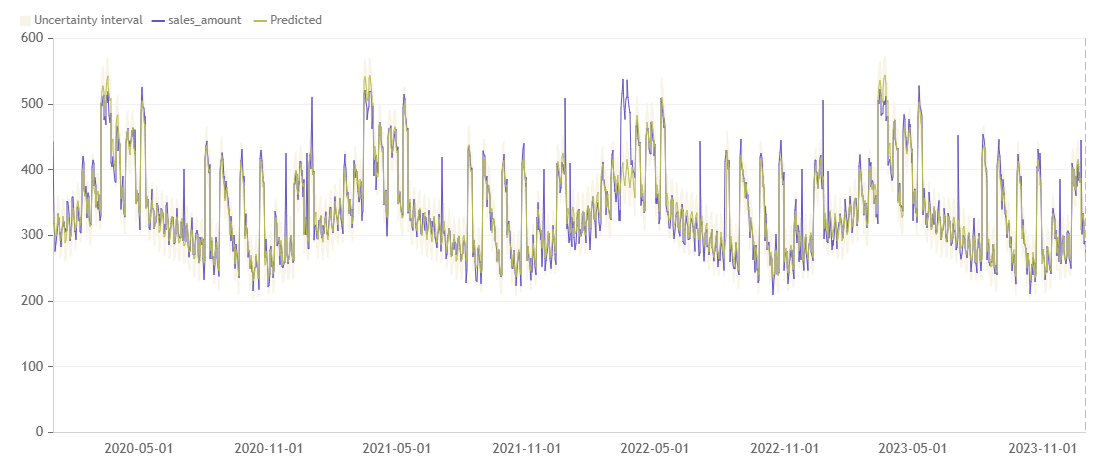

Forecast With Regressors: Now It Sees the Real World

Next, we added two drivers:

- holiday

- promotion

📊 R-squared jumped to 90.09%

📉 MAPE dropped to 3.58%

This is where the magic happened.

Now the model didn’t just follow history. It explained it.

Spikes aligned with promo weeks.

Dips aligned with holidays.

The model adjusted expectations dynamically — just like a planner would.
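The mechanism can be illustrated with a small stand-in model. The sketch below is not the algorithm used in this case study (that is not specified here); it swaps in a plain linear regression with a trend term and day-of-week dummies, then adds `holiday` and `promo` columns as extra regressors. The data is synthetic, and the effect sizes (+120 for a promotion, -80 for a holiday) are made up for illustration.

```python
def solve_linear(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (m[i][n] - sum(m[i][j] * x[j] for j in range(i + 1, n))) / m[i][i]
    return x

def fit_ols(X, y):
    """Ordinary least squares via the normal equations (X'X) b = X'y."""
    k = len(X[0])
    xtx = [[sum(row[i] * row[j] for row in X) for j in range(k)] for i in range(k)]
    xty = [sum(row[i] * yi for row, yi in zip(X, y)) for i in range(k)]
    return solve_linear(xtx, xty)

def predict(X, coef):
    return [sum(c * v for c, v in zip(coef, row)) for row in X]

def r_squared(actual, predicted):
    mean_actual = sum(actual) / len(actual)
    ss_res = sum((a - p) ** 2 for a, p in zip(actual, predicted))
    ss_tot = sum((a - mean_actual) ** 2 for a in actual)
    return 1 - ss_res / ss_tot

# Synthetic 8-week daily series (all numbers are invented for illustration).
WEEKLY = [0, -20, -10, 5, 30, 60, 40]       # day-of-week pattern
PROMO_DAYS = {10, 11, 12, 30, 31, 32, 33}   # days with a promotion running
HOLIDAY_DAYS = {20, 45}                     # days flagged as holidays
days = list(range(56))
holiday = [1.0 if t in HOLIDAY_DAYS else 0.0 for t in days]
promo = [1.0 if t in PROMO_DAYS else 0.0 for t in days]
sales = [300 + 0.5 * t + WEEKLY[t % 7] + 120 * promo[t] - 80 * holiday[t]
         for t in days]

def base_features(t):
    """Intercept, linear trend, and six day-of-week dummies (day 0 is the reference)."""
    return [1.0, float(t)] + [1.0 if t % 7 == d else 0.0 for d in range(1, 7)]

X_base = [base_features(t) for t in days]
X_full = [base_features(t) + [holiday[t], promo[t]] for t in days]

coef_base = fit_ols(X_base, sales)
coef_full = fit_ols(X_full, sales)
r2_base = r_squared(sales, predict(X_base, coef_base))
r2_full = r_squared(sales, predict(X_full, coef_full))

print(f"baseline R-squared:        {r2_base:.3f}")
print(f"with regressors R-squared: {r2_full:.3f}")
print(f"estimated holiday effect:  {coef_full[-2]:.1f}")
print(f"estimated promo effect:    {coef_full[-1]:.1f}")
```

Because the synthetic series contains no noise, the regressor version recovers the invented effects almost exactly. Real data will be messier, but the pattern is the same: the baseline has to treat promo and holiday days as unexplained variation, while the enriched model attributes them to a named driver.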

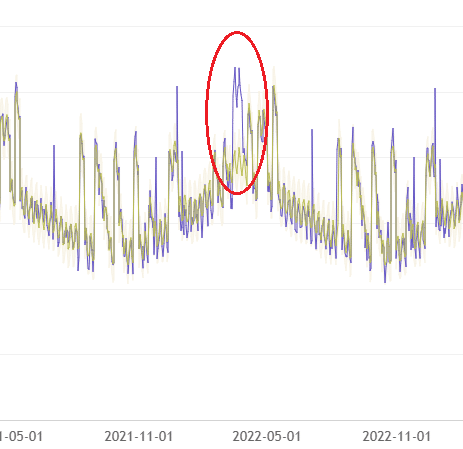

The Remaining Mystery: March 2022

But one moment stood out.

In March 2022, we observed a sharp spike in sales that wasn’t tied to any known promotion or holiday.

The baseline model missed it.

The regressor model missed it too. Its error was slightly smaller, but it still underpredicted.

This was a signal: there are still drivers we’re not tracking.

Maybe it was a regional campaign.

Maybe a competitor ran out of stock.

Maybe weather, social media buzz, or a distributor push triggered it.

Whatever it was, it didn’t live in the current dataset.

And that’s the value: the forecast didn’t just give a number. It told us where we’re blind.
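One way to surface blind spots like this systematically is to scan the forecast residuals for days the model could not explain. The sketch below is one possible approach, not the method used in this case study: it flags days whose residual sits more than a chosen number of robust standard deviations from the median residual. The function name `flag_unexplained`, the threshold of 3, and the sample values are all assumptions made for illustration.

```python
from statistics import median

def flag_unexplained(labels, actual, predicted, threshold=3.0):
    """Return (label, residual) pairs whose residual is unusually far from typical.

    Uses the median absolute deviation (MAD), scaled by 1.4826 so it is
    comparable to a standard deviation for roughly normal residuals.
    """
    residuals = [a - p for a, p in zip(actual, predicted)]
    center = median(residuals)
    mad = median([abs(r - center) for r in residuals])
    scale = 1.4826 * mad
    if scale == 0:
        return []  # residuals are too uniform to define "unusual"
    return [(lab, r) for lab, r in zip(labels, residuals)
            if abs(r - center) > threshold * scale]

# Illustrative values only: one day with a large unexplained spike.
labels = ["d1", "d2", "d3", "d4", "d5", "d6", "d7"]
actual = [310, 295, 305, 300, 480, 298, 302]
predicted = [300, 300, 300, 300, 300, 300, 300]

flagged = flag_unexplained(labels, actual, predicted)
print(flagged)
```

A median-based scale is used here because a single large spike would inflate an ordinary standard deviation and could hide the very day being looked for. Each flagged date becomes a question for the business: what happened that day that the dataset does not record?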

What We Learned

- A trend-based forecast is good. But it stops short of answering “why.”

- Adding regressors made the model smarter. It became context-aware.

- Unexplained anomalies highlight data gaps. These aren’t failures — they’re opportunities.

The Business Value

With the enriched forecast:

- Demand planning became more accurate

- Overstock and understock risk decreased

- Marketing could see the measurable impact of its campaigns

- Finance could run realistic what-if simulations

It wasn’t about fancy metrics. It was about turning sales history into a predictive, decision-ready signal — and knowing when to challenge it.

Conclusion

Forecasting isn’t just about drawing a curve through the past. It’s about learning from it.

Adding regressors made our model see like a business planner — not a statistician.

And when the model doesn’t know why something happened? That’s where the next question starts.

📅 Want to move beyond static forecasts?

Book a demo with Graphite Note:

https://graphite-note.com/book-demo